Architecture Components

Kenogrammatic States

Polycontextural Computational State

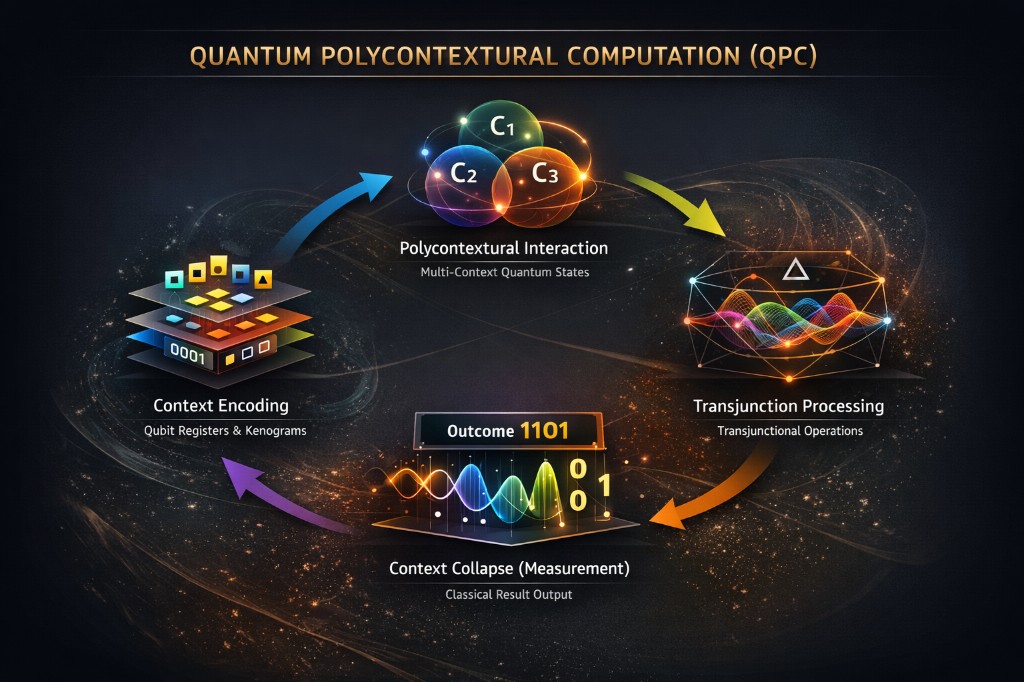

In Quantum Polycontextural Computing (QPC), the fundamental computational entity is neither a binary bit nor a single quantum state but a polycontextural configuration: a set of interacting logical contextures. A QPC computational state can be described as a structured set Ψ = {C₁, C₂, C₃, …, Cₙ}, where each Cᵢ represents an independent logical contexture. Each contexture contains a configuration of quantum states together with the contextual relations among them. Unlike the alternatives in a classical system, contextures are not mutually exclusive: they exist concurrently and interact through quantum operations.
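As a purely classical illustration of the set Ψ described above, the following minimal sketch stores contextures side by side in one container. The names `Contexture`, `PolyState`, and `relations` are hypothetical and chosen only for this example; the text does not prescribe a data layout, and no quantum behaviour is modelled here.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Contexture:
    """One logical contexture C_i (hypothetical representation)."""
    name: str
    # Contextual relations, stored as pairs of state labels (an assumption).
    relations: frozenset = frozenset()


@dataclass
class PolyState:
    """Psi = {C_1, ..., C_n}: contextures coexist instead of excluding each other."""
    contextures: dict = field(default_factory=dict)

    def add(self, contexture: Contexture) -> None:
        # Adding a contexture never removes or overrides a different one.
        self.contextures[contexture.name] = contexture


psi = PolyState()
psi.add(Contexture("C1", frozenset({("a", "b")})))
psi.add(Contexture("C2", frozenset({("a", "c")})))
```

Both contextures remain present in `psi.contextures` after the second call, which is the only property this sketch is meant to show.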

Morphogrammatic Operations

Contextural Encoding

Within each contexture, information is represented through kenogrammatic and morphogrammatic structures rather than Boolean truth assignments. These structures encode relational patterns between quantum states rather than fixed logical values. Thus computation operates on patterns of contextual relations, not on binary variables.
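One way to make "patterns rather than values" concrete is to reduce a sequence to the pattern of which positions are equal and which differ, discarding the symbols themselves. The sketch below does this with a hypothetical helper `relational_pattern`. It assumes that a pattern is identified only by the equality structure of its positions, which is an assumption made for this example, not a definition given in the text.

```python
def relational_pattern(sequence):
    """Relabel items by order of first occurrence, keeping only sameness/difference."""
    labels = {}
    pattern = []
    for item in sequence:
        if item not in labels:
            # The first new item becomes 0, the next new item 1, and so on.
            labels[item] = len(labels)
        pattern.append(labels[item])
    return tuple(pattern)


# Different symbols, same relational pattern.
p1 = relational_pattern("abba")
p2 = relational_pattern([1, 0, 0, 1])
# Same symbols as the first input, different relational pattern.
p3 = relational_pattern("abab")
```

Here `p1` and `p2` are equal although the inputs share no symbols, while `p3` differs from `p1` although it uses the same symbols.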

Transjunctional Gates

Transjunctional Operations

The fundamental transformation in QPC is the transjunctional operation. A transjunction transforms the relationships between contextures rather than individual quantum amplitudes. Formally, it can be described as a transformation T : Ψ → Ψ', where the mapping reorganizes contextual relations across the contextural network. These transformations allow contextual interference, multi-layer logical interaction, and the structured coexistence of contradictory states.
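The mapping T : Ψ → Ψ' can be pictured classically as a rewiring of the links between contextures that leaves the contextures themselves untouched. The sketch below assumes Ψ is reduced to a set of undirected links between contexture names; the function `transjunction` and its `rewire` argument are hypothetical and stand in for whatever rule an actual QPC operation would apply.

```python
def transjunction(links, rewire):
    """Return the reorganized link set Psi' for a given link set Psi.

    links  -- iterable of (name, name) pairs between contextures
    rewire -- dict mapping a contexture name to the name that replaces it
    """
    new_links = set()
    for a, b in links:
        a2, b2 = rewire.get(a, a), rewire.get(b, b)
        # Links are undirected, so store each pair in sorted order.
        new_links.add(tuple(sorted((a2, b2))))
    return frozenset(new_links)


psi_links = {("C1", "C2"), ("C2", "C3")}
# Exchange the roles of C2 and C3 in the contextural network.
psi_prime_links = transjunction(psi_links, {"C2": "C3", "C3": "C2"})
```

After the call, C1 is linked to C3 instead of C2, while the link between C2 and C3 is preserved. Only the relations change; no contexture is added or removed.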

Quantum Physical Realization

The polycontextural structures are embedded into physical quantum systems through contextural encoding circuits. These circuits map contextures onto qubit registers while preserving contextual relations through entanglement and interference. Because QPC operates at the logical architecture layer, it is not tied to a single hardware platform and can be executed on superconducting qubits, trapped ions, neutral atoms, or photonic systems.
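The first step of such an encoding, assigning each contexture its own qubit register, can be sketched as a simple layout computation. The helper `allocate_registers` and the register sizes are hypothetical; the entangling operations that would preserve relations between registers are not modelled.

```python
def allocate_registers(sizes):
    """Assign each contexture a contiguous, non-overlapping range of qubit indices.

    sizes -- dict mapping contexture name to the number of qubits it needs
    """
    layout = {}
    start = 0
    for name, n_qubits in sizes.items():
        layout[name] = range(start, start + n_qubits)
        start += n_qubits
    return layout


layout = allocate_registers({"C1": 2, "C2": 3})
total_qubits = sum(len(r) for r in layout.values())
```

The layout is hardware-agnostic: it names only logical qubit indices, which a platform-specific compiler would later map to physical qubits.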

Contextural Collapse (Measurement)

Observation of a QPC system produces a contextural collapse. During measurement, the polycontextural configuration Ψ collapses into a classical observable configuration: Ψ → Ω, where Ω represents the measurable classical output derived from the contextual superposition. Collapse occurs only at the measurement boundary; the contextual structure remains intact throughout the computation.
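The step Ψ → Ω can be illustrated with ordinary quantum measurement, where an outcome is sampled with probability equal to the squared magnitude of its amplitude (the Born rule). The sketch below assumes the pre-measurement state is already expressed as amplitudes over classical outcomes. The function `collapse` is hypothetical, and the text does not say how contextural structure enters these amplitudes.

```python
import random


def collapse(amplitudes, rng=None):
    """Sample one classical outcome Omega from a dict of outcome -> amplitude."""
    rng = rng or random.Random()
    outcomes = list(amplitudes)
    # Born rule: probability is the squared magnitude of the amplitude.
    weights = [abs(amplitudes[o]) ** 2 for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]


# A state concentrated on a single outcome always yields that outcome.
certain = collapse({"00": 1.0, "11": 0.0})

# An equal superposition yields either outcome; the seed only fixes the sample.
amp = 2 ** -0.5
sampled = collapse({"00": amp, "11": amp}, rng=random.Random(0))
```

The function is called once, at the end, which mirrors the statement that collapse belongs to the measurement boundary and not to the computation itself.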

Computational Implication

Because QPC allows multiple logical contextures to exist and interact simultaneously, it enables the representation of systems that are difficult or impossible to model within single-context computational frameworks. These include complex systemic interactions, multi-layer decision structures, context-dependent optimization problems, and large-scale network cascade dynamics.

QPC Process: Context Encoding → Polycontextural Interaction → Transjunction Processing → Context Collapse (Measurement)
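The four stages of this process can be read as a pipeline of functions applied in order. The sketch below is a classical toy: every stage body is a placeholder invented for this example (in particular the rule inside `transjunct`), and only the ordering of the stages is taken from the text.

```python
from functools import reduce


def encode(problem):
    """Context Encoding: wrap the input as named contextures with no links yet."""
    return {"contextures": dict(problem), "links": set()}


def interact(state):
    """Polycontextural Interaction: link every pair of contextures."""
    names = sorted(state["contextures"])
    links = {(a, b) for i, a in enumerate(names) for b in names[i + 1:]}
    return {**state, "links": links}


def transjunct(state):
    """Transjunction Processing (placeholder rule): keep links between
    contextures that share at least one element."""
    ctx = state["contextures"]
    kept = {(a, b) for a, b in state["links"] if set(ctx[a]) & set(ctx[b])}
    return {**state, "links": kept}


def measure(state):
    """Context Collapse: return a plain classical result."""
    return sorted(state["links"])


PIPELINE = (encode, interact, transjunct, measure)


def run(problem):
    # Feed the output of each stage into the next one.
    return reduce(lambda acc, stage: stage(acc), PIPELINE, problem)


omega = run({"C1": "ab", "C2": "bc", "C3": "xy"})
```

With this input, only C1 and C2 share an element, so `omega` contains the single link between them.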